Today my boyfriend’s iPhone screen cracked (not spontaneously; he dropped it), and the cracked screen reminded me of one depressing fact: despite research into Natural User Interfaces and embodied cognition, all these smartphones and tablets are just pictures under glass. Our interactions with them are funneled mostly through one or two fingers. In fact, I’d argue this is a step back from using a mouse and keyboard. Just try coding with a touchscreen keyboard! I dare you. If you haven’t seen Bret Victor’s illuminating rant about Pictures Under Glass, you can read it here.

Olympic Grace for the Rest of Us

With the inspiring Olympic displays of the power and grace of the human body all around us, it’s dreadful that we confine the human body that is capable of this:

to interactions like this:

No Olympic grace there, and the sadder thing is that the poor kid is probably looking at many years of pointing and sliding to come.

From Bret Victor’s rant: “The next time you make breakfast, pay attention to the exquisitely intricate choreography of opening cupboards and pouring the milk — notice how your limbs move in space, how effortlessly you use your weight and balance. The only reason your mind doesn’t explode every morning from the sheer awesomeness of your balletic achievement is that everyone else in the world can do this as well. With an entire body at your command, do you seriously think the Future Of Interaction should be a single finger?”

So what are some interfaces that truly allow us to interact naturally with our environment and still benefit from technology?

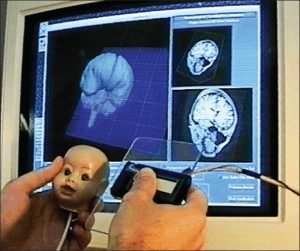

Brain Imaging Made Easy

In this 1994 paper, Ken Hinckley, Randy Pausch and their colleagues detail a system using an acrylic plane and a doll’s head to help neurosurgeons interact with brain imaging software. The 3D planes that the neurosurgeons need to view are difficult to navigate with a mouse or keyboard, but very intuitive with an acrylic “plane” and a “model” of the head.

From the paper: “All of the approximately 15 neurosurgeons who have tried the interface were able to ‘get the hang of it’ within about one minute of touching the props; many users required considerably less time than this.” Of course, they are neurosurgeons, but I doubt they would get the hang of most keyboard-and-mouse interfaces to this system in about a minute.

This interface was designed in the ’90s, and we’re still stuck on touchscreens!

Blast Motion’s Sensors

Blast Motion Inc. creates puck-shaped wireless devices that can be embedded into golf clubs and similar sporting equipment. The pucks can collect data about the user’s swing, speed or motion in general. The data is useful feedback to help the user assess and improve their swing.

I like that the interface here is a real golf club, likely the user’s own club, rather than some electronic measuring device. The puck seems small and light enough not to interfere with the swing, and will soon be embedded into the golf club rather than sold as a separate attachment. I’m interested to see how their product fares when it comes out.

But I Can’t Control My Computer with a Golf Club

Yes, yes, neither of these interfaces can be generalized to everyday computing, but maybe that expectation is the limitation we should be moving away from. Why are we shoehorning a touchscreen interface onto everything? Perhaps we need to look at the task at hand and design the best interface for it, not the best touchscreen UI. The ReacTable is a great example of a completely new interface designed for the specific task of creating digital music. (Of course, the app is now available for iOS and Android – back to the touchscreen!) Similarly, the Wii and Kinect have made strides in allowing natural input, but have only recently been considered for serious applications. I really hope that natural interfaces start becoming the norm rather than the exception.

Have you struggled with Pictures Under Glass interfaces for your tasks?

Have you encountered any NUIs (Natural User Interfaces) that you enjoyed (or didn’t)?

Let me know in the comments below.